Lightelligence Targets AI Bottlenecks with Photonics

Sarab Chopra, Editor of PIC Magazine, speaks with Maurice (Mo) Steinman, Vice President of Engineering at Lightelligence, about how photonic technologies are addressing key performance bottlenecks in AI systems.

The discussion explores the growing limitations of traditional interconnects in large-scale AI clusters, and how optical networking and distributed architectures can improve bandwidth, latency and system utilisation. Steinman also outlines the role of Lightelligence’s photonic computing platform in accelerating core AI operations, as well as the company’s broader strategy spanning both compute and interconnect. The conversation further highlights the challenges and opportunities in scaling photonics across data centres, including the transition from copper to optical links and the path toward co-packaged optics (CPO).

SC: Why is interconnect becoming the main bottleneck in AI systems?

MS: AI workloads typically involve a large memory footprint and high computational demand, exceeding the capabilities of a single system node. To meet the overall demand, many nodes must be aggregated into clusters that perform like a single large node. This requires robust, high-performance (reliable, low-latency, high-bandwidth) data interconnection networks so that information can flow among nodes, ensuring maximum utilisation of the compute resources at each node. With each node operating at or near its maximum utilisation, the entire cluster can scale to meet the workload requirement.

As the physical footprint of the cluster grows, the data interconnection network must be able to span the distance between nodes without sacrificing performance while still maintaining robustness and power efficiency. Optical data interconnects provide the reach that is unattainable by purely electronic counterparts, enabling efficient scaling to extremely large clusters.

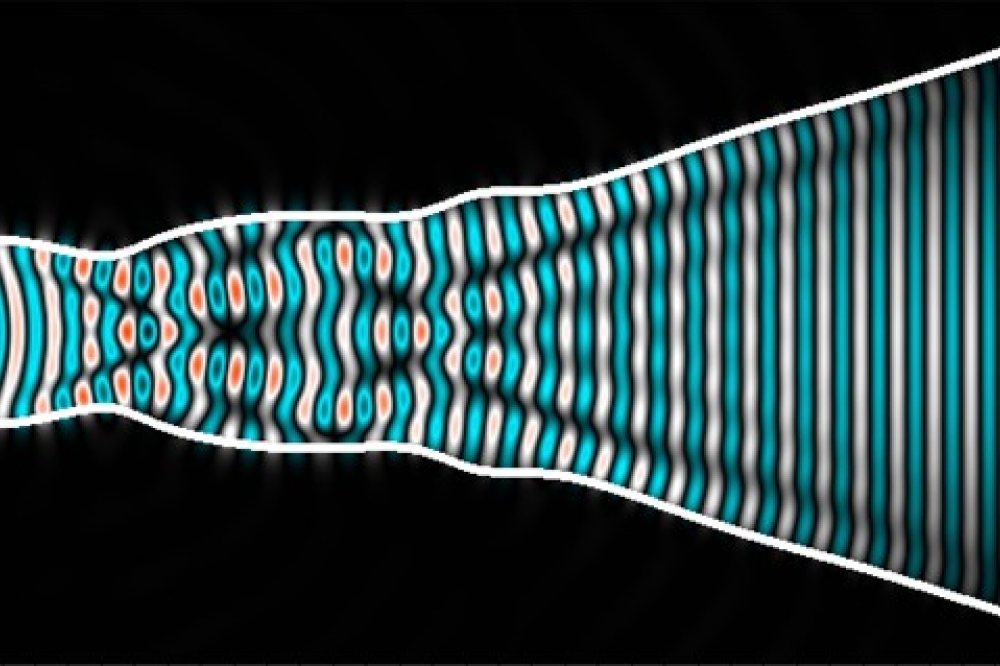

SC: How does PACE2 improve compute efficiency with photonics?

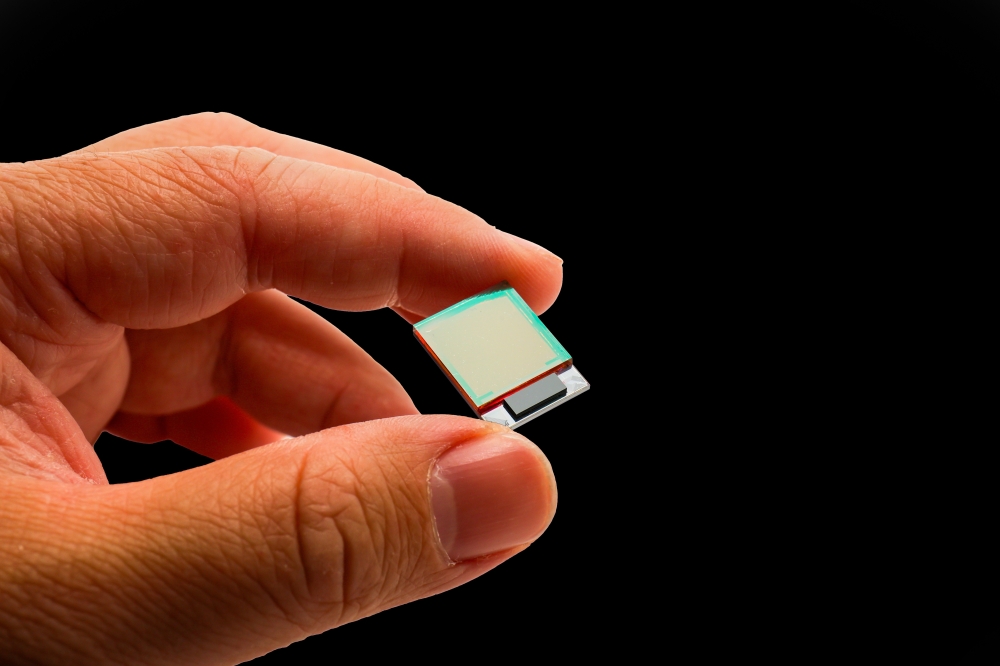

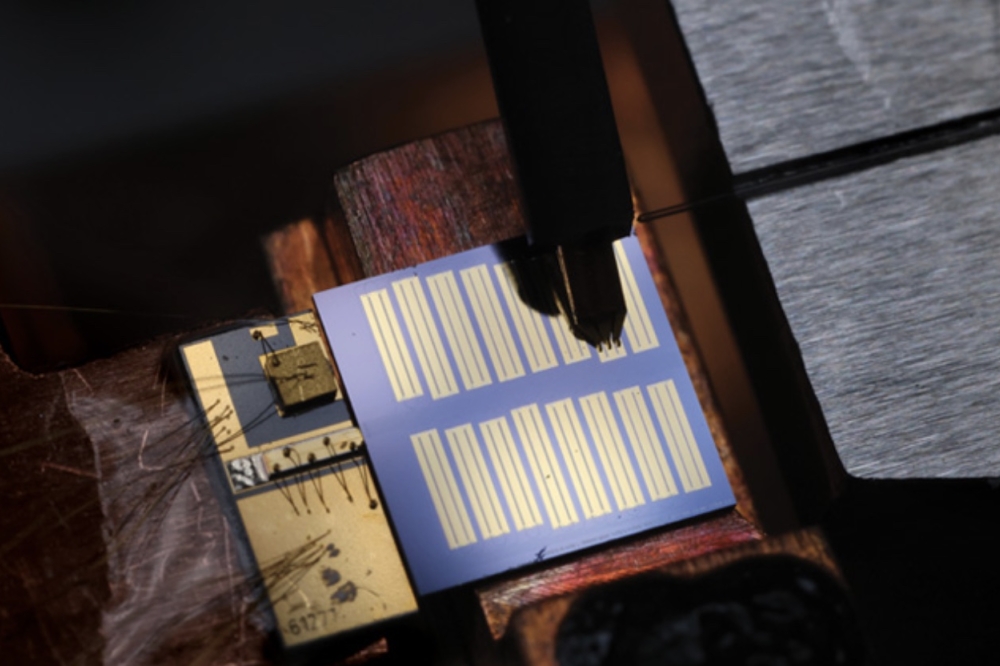

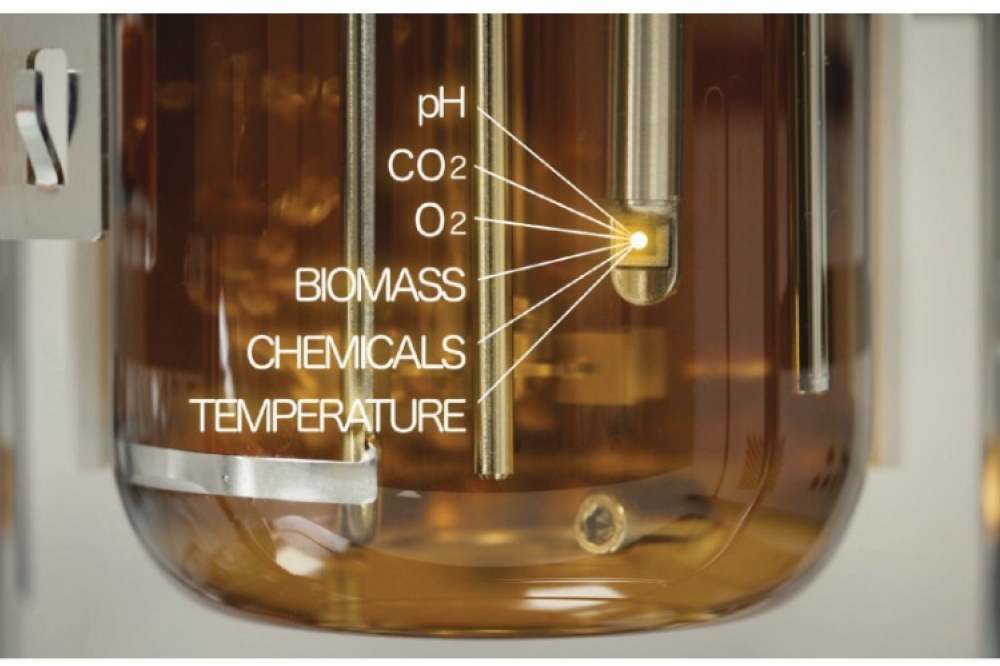

MS: PACE2 is Lightelligence’s second-generation photonic computing platform. Like its predecessor, it features a photonic processor that accelerates linear operations such as vector-matrix multiplication. PACE2 completes these operations power-efficiently and at extremely low latency, as the photonic compute engine operates without the need for pipeline stages. Vector-matrix multiplication is a cornerstone of most AI workloads, and PACE2 is built with programmability and developer-friendly features so that developers can explore algorithms and workloads that exploit its speed and efficiency.
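For readers less familiar with the operation mentioned above, the following is a minimal reference sketch of vector-matrix multiplication in plain Python. It only shows the arithmetic that such an accelerator would offload. It is not Lightelligence's API or PACE2 code, and the function name `vec_mat_mul` and the sample values are illustrative assumptions.

```python
def vec_mat_mul(x, W):
    """Return y = x @ W for a length-n vector x and an n-by-m matrix W.

    W is given as a list of n rows, each with m entries.
    Hypothetical helper for illustration only.
    """
    if len(x) != len(W):
        raise ValueError("vector length must equal the number of matrix rows")
    n_cols = len(W[0])
    # Each output element is a dot product of x with one column of W.
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(n_cols)]


x = [1.0, 2.0, 3.0]
W = [
    [1.0, 0.0],
    [0.0, 1.0],
    [2.0, 2.0],
]
y = vec_mat_mul(x, W)
print(y)  # [7.0, 8.0]
```

Every output element is an independent sum of products, which is why this operation is a natural target for hardware that evaluates many multiply-accumulate steps at once.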

SC: How does dOCS boost utilisation and cluster flexibility?

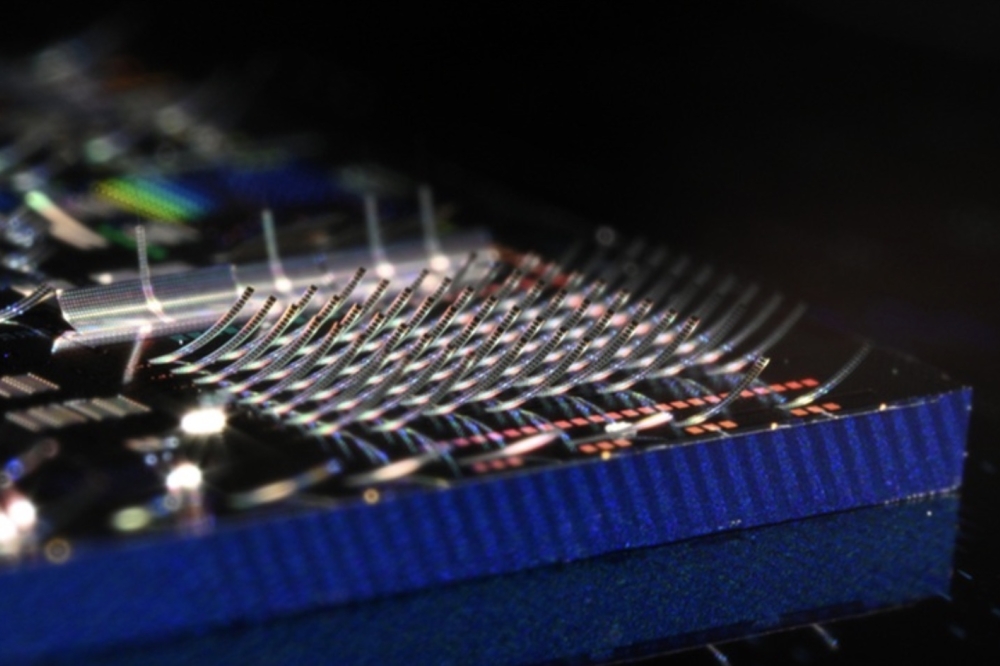

MS: Distributed Optical Circuit Switching (dOCS) integrates topology reconfiguration capability directly into the scale-up network. This enables customers to reconfigure the cluster topology to match workload parallelism requirements. Additionally, because the circuit switching function is distributed directly in the optical interconnect that makes up the scale-up network, the single point of failure and large blast radius typically associated with a centralised switch architecture are avoided. This enables greater cluster availability and reduces checkpointing costs.
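The blast-radius argument can be made concrete with a toy graph model. The sketch below compares how many nodes remain reachable after one failure in a centralised (star) layout versus a layout with no central element. The ring topology, the node count, and all function names are assumptions chosen for illustration; the interview does not describe the actual dOCS topology, and this is not a model of the product.

```python
from collections import deque


def reachable(adjacency, start, failed):
    """Return the set of nodes reachable from `start` when `failed` is down."""
    if start == failed:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour != failed and neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen


def star(n):
    """n compute nodes attached to one hypothetical central switch 'sw'."""
    adjacency = {i: ["sw"] for i in range(n)}
    adjacency["sw"] = list(range(n))
    return adjacency


def ring(n):
    """n compute nodes, each linked directly to its two neighbours."""
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}


n = 8
star_after = reachable(star(n), start=0, failed="sw")  # central switch fails
ring_after = reachable(ring(n), start=0, failed=4)     # one node fails
print(len(star_after), len(ring_after))  # 1 7
```

In the star case, losing the switch isolates every node. In the ring case, losing one node leaves the remaining seven connected, which is the sense in which a distributed design limits the blast radius.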

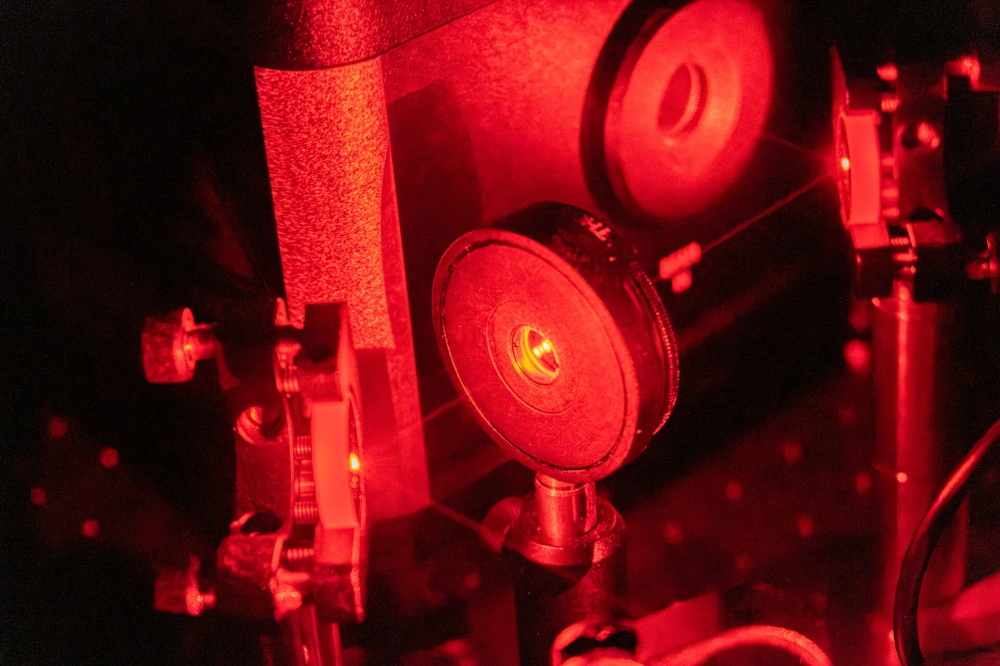

SC: What makes your photonic solutions production-ready?

MS: Lightelligence’s engineering and product teams have been developing photonic solutions since 2017. Through iteration, continuous improvement, and a commitment to a hardware/software co-design philosophy, our photonic solutions have improved in performance, scalability, power efficiency and reliability with each generation.

Today, we are deploying large configurations at customer sites and in data centres. Together with our manufacturing partners, we have delivered at scale.

SC: How do you stand out from other photonic compute solutions?

MS: Unlike other players in the ecosystem, Lightelligence provides both photonic compute products, which accelerate single-node processing, and optical interconnect fabric products, which scale performance beyond the single node. Covering both of these domains gives our team unique insight compared with component manufacturers, resulting in deeper customer partnerships.

SC: What are the main challenges and opportunities for scaling photonics in AI data centres?

MS: On the photonic computing side, continued algorithm and workload exploration is required before we see broad adoption in the data centre. Given the rising popularity of generative AI workloads and the expectation of continued innovation, we have developed PACE2 with the developer in mind. It is designed to facilitate workload and algorithm exploration, so that we are well positioned for this opportunity.

On the interconnect fabric side, the primary challenge is to overcome the perceived advantages (cost, reliability, power) of incumbent copper interconnect solutions. Fortunately, as interconnect speeds and distances increase, the need for optical solutions grows accordingly. The industry is innovating with alternative form factors to manage the cost and complexity of the transition to fully optical interconnect, culminating in Co-Packaged Optics (CPO). With technologies such as Linear Pluggable Optics (LPO) and Near Package Optics (NPO), adoption of optical interconnect is increasing today, ahead of wide-scale CPO adoption, which will take some time. We are meeting our customers’ needs today and developing the technologies we anticipate they will need tomorrow.