Mellanox announces 200Gb/s HDR InfiniBand solutions to enable record performance and scalability levels

ConnectX-6 adapters, Quantum switches and LinkX cables to offer complete end-to-end 200G HDR InfiniBand infrastructure and accelerate next-generation data centres

Mellanox Technologies, a supplier of end-to-end interconnect solutions for data centre servers and storage systems, has announced the world's first 200Gb/s data centre interconnect solutions. Mellanox ConnectX-6 adapters, Quantum switches, and LinkX cables and transceivers together offer a complete 200Gb/s HDR InfiniBand interconnect infrastructure for the next generation of high-performance computing, machine learning, big data, cloud, Web 2.0 and storage platforms. These 200Gb/s HDR InfiniBand solutions are designed to let customers and users leverage an open, standards-based technology that maximises application performance and scalability while minimising overall data centre total cost of ownership.

"The ability to effectively utilise the exponential growth of data and to leverage data insights to gain that competitive advantage in real time is key for business success, homeland security, technology innovation, new research capabilities and beyond. The network is a critical enabler in today's system designs that will propel the most demanding applications and drive the next life-changing discoveries," said Eyal Waldman, president and CEO of Mellanox Technologies. "Mellanox is proud to announce the new 200Gb/s HDR InfiniBand solutions that will deliver the world's highest data speeds and intelligent interconnect and empower the world of data in which we live. HDR InfiniBand sets a new level of performance and scalability records while delivering the next-generation of interconnects needs to our customers and partners."

"Ten years ago, when Intersect360 Research began its business tracking the HPC market, InfiniBand had just become the predominant high-performance interconnect option for clusters, with Mellanox as the leading provider," said Addison Snell, CEO of Intersect360 Research. "Over time, InfiniBand continued to grow, and today it is the leading high-performance storage interconnect for HPC systems as well. This is at a time when high data rate applications like analytics and machine learning are expanding rapidly, increasing the need for high-bandwidth, low-latency interconnects into even more markets. HDR InfiniBand is a big leap forward and Mellanox is making it a reality at a great time."

"The leadership scale science and data analytics problems we are working to solve today and in the near future require very high bandwidth linking compute nodes, storage, and analytics systems into a single problem solving environment," said Arthur Bland, OLCF Project Director, Oak Ridge National Laboratory. "With HDR InfiniBand technology, we will have an open solution that allows us to link all of our systems at very high bandwidth."

"Data movement throughout the system is a critical aspect of current and future systems. Open network technology will be a key consideration as we plan the next generation of large-scale systems, including ones that will achieve Exascale performance," said Bronis de Supinski, chief technology officer in Livermore Computing. "HDR InfiniBand solutions represent an important development in this technology space."

"We are excited to see Mellanox continue leadership in high speed interconnects," said Parks Fields, SSI team lead HPC-design at the Los Alamos National Laboratory. "HDR InfiniBand will provide us with the performance capabilities needed for our applications."

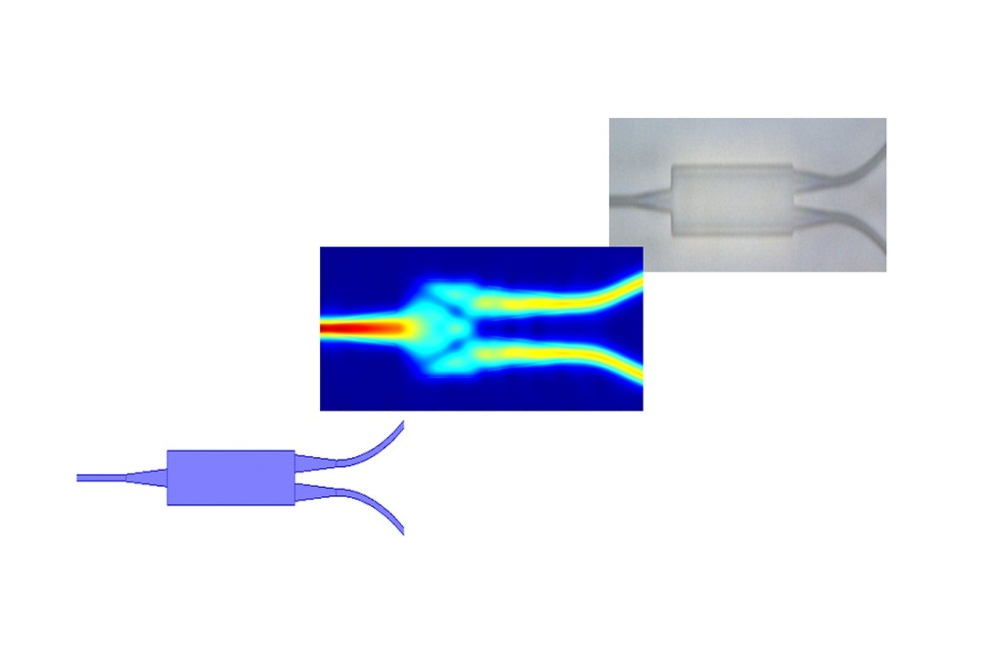

To complete the end-to-end 200Gb/s InfiniBand infrastructure, Mellanox LinkX solutions will offer a family of 200Gb/s copper and silicon photonics fibre cables.